The Archive

100,000+ pages of gaming history, indexed so both humans and AI can query primary sources.

The corpus

600+ issues spanning Gamest, Dengeki PlayStation, Dengeki Oh!, Sega Saturn Magazine, PC-Engine Fan, Saturn Fan, and other Japanese gaming publications from 1986 through the mid-2000s. Cover art, strategy guides, developer interviews, hardware ads, launch coverage. The browser shows every issue indexed, filterable by magazine and era. Each cover is a doorway into 100-250 pages of timestamped gaming history.


Search and retrieval

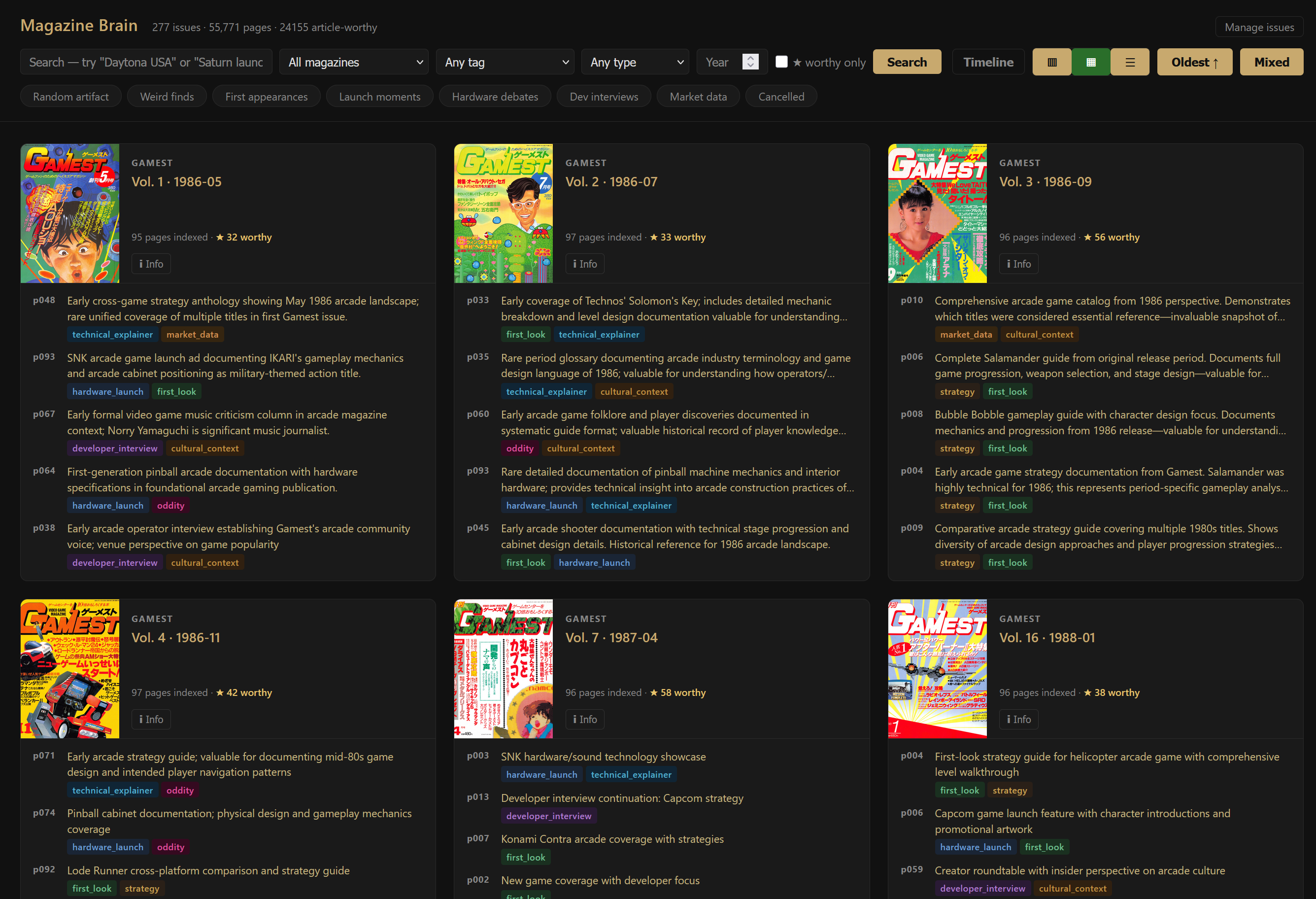

Every page is searchable across 11 extracted fields, including games, developers, people, content types, platforms, historical tags, and article-worthiness notes. Results surface the issue, page number, a generated description, and the tags that made the page article-worthy. More than 18,000 pages are flagged article-worthy, with notes on why they matter. The same index that serves human research also grounds the AI synthesis pipeline, and it is exposed via an MCP server so Claude (or any MCP client) can query the corpus directly.
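A minimal sketch of what field-scoped search could look like, assuming the extracted fields are flattened into an SQLite FTS5 table. The table name, column names, and sample rows here are invented placeholders, not the archive's actual schema or data, and the sketch needs an SQLite build with FTS5 enabled.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical schema: a subset of the extracted fields, stored as text.
# `page` is kept for display only, so it is excluded from the full-text index.
conn.execute(
    """
    CREATE VIRTUAL TABLE pages USING fts5(
        magazine, issue, page UNINDEXED,
        games, developers, platforms, content_types, description
    )
    """
)

# Placeholder rows for illustration only; none of this is real corpus data.
conn.executemany(
    "INSERT INTO pages VALUES (?, ?, ?, ?, ?, ?, ?, ?)",
    [
        ("Magazine A", "1995-03", 12, "Example Fighter", "Example Studio",
         "Saturn", "advertisement", "Full-page launch ad."),
        ("Magazine A", "1995-03", 40, "Example Fighter", "Example Studio",
         "Saturn", "strategy guide", "Move list and combos."),
        ("Magazine B", "1996-01", 7, "Other Game", "Other Studio",
         "PlayStation", "advertisement", "Two-page ad spread."),
    ],
)


def search(conn: sqlite3.Connection, match_query: str) -> list[tuple]:
    """Run an FTS5 query and return (magazine, issue, page, description) rows."""
    return conn.execute(
        "SELECT magazine, issue, page, description "
        "FROM pages WHERE pages MATCH ? ORDER BY rank",
        (match_query,),
    ).fetchall()


# FTS5 column filters restrict each term to one extracted field.
hits = search(conn, 'games:"example fighter" AND content_types:advertisement')
for magazine, issue, page, description in hits:
    print(magazine, issue, page, description)
```

Scoping each term to a column is what lets a question like "every ad for this title" stay a single query instead of a scan over descriptions.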

The pages

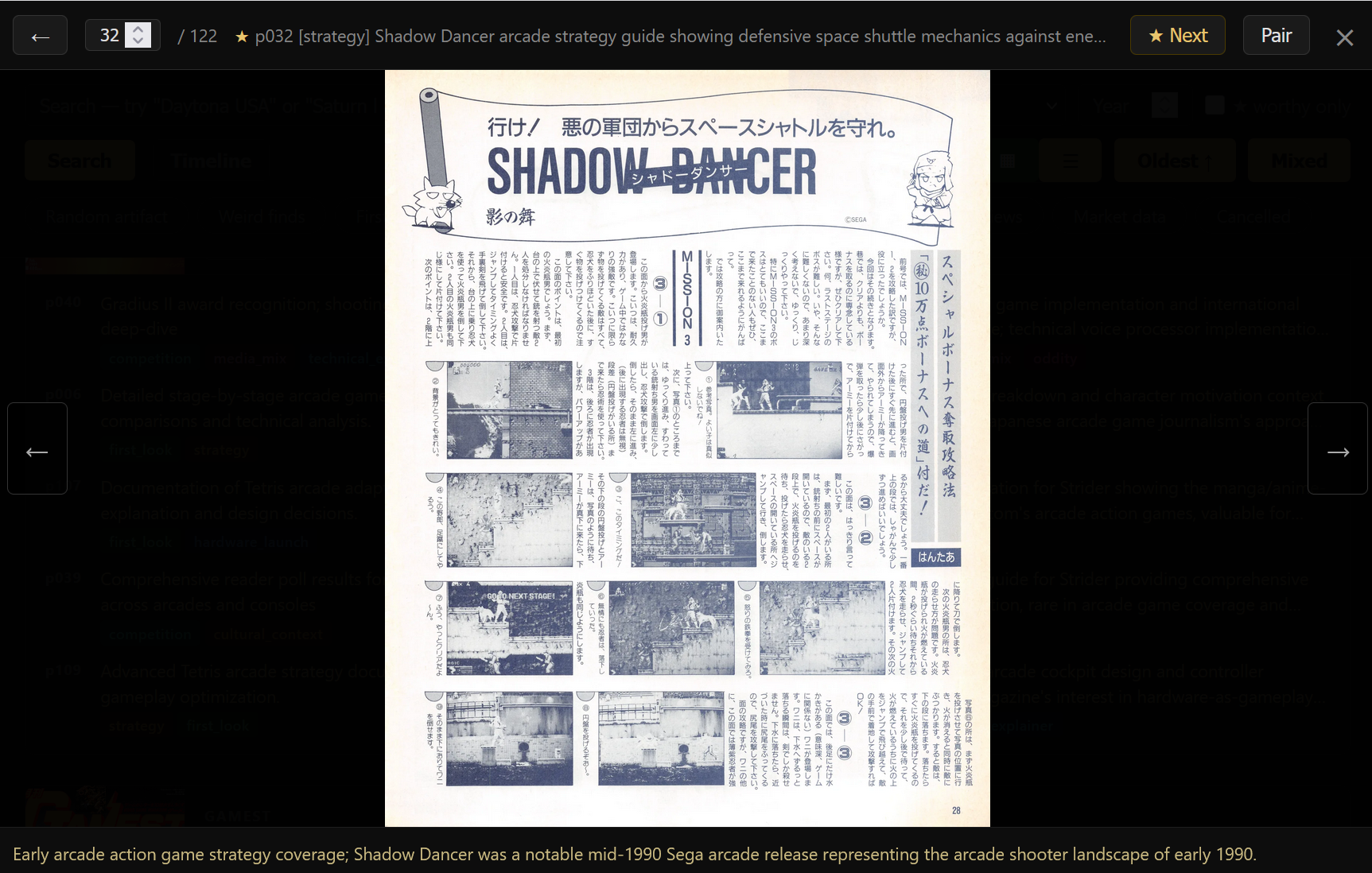

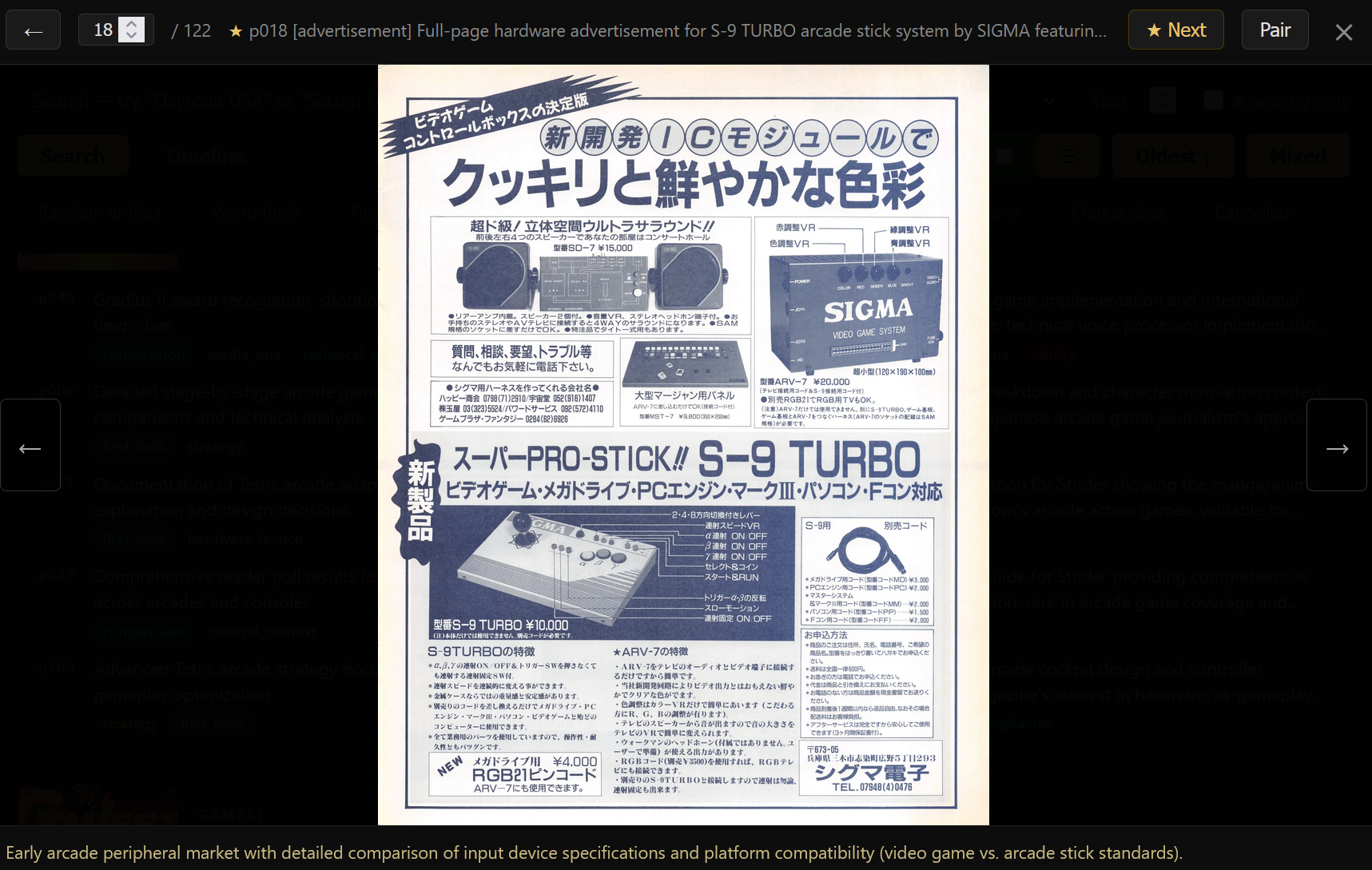

The individual page viewer shows the original scan with the generated description at the bottom. Whether it is a strategy guide, a hardware ad, or a developer feature, each page carries its own context: what publication, what year, what it documents. Pages can be paired for multi-page spreads.

Ask it anything

Ask your LLM when a certain game was released, how many articles covered Virtua Fighter's launch, or to pull every advertisement for a specific title across all indexed magazines. The answers come from the actual historical pages, scanned and indexed, rather than from the web. It is your own primary source: 107,000+ pages of contemporary coverage, ads, and editorial from the years these games shipped, connected to your tools via MCP.

Why MCP is the right structure for this

An indexing database is the canonical case for MCP. The corpus is too large to fit in any context window (107,000+ pages, hundreds of thousands of extracted facts), but every interesting question reduces to a small structured query. MCP turns 'how many Saturn launch ads ran in early 1995' from a context-window problem into a tool call that returns 30 verified rows.

The model and the corpus stay independently improvable. Better models can be slotted into the indexing layer without touching the schema or the consumer side. Better consumer tools (Claude Desktop, custom agents, the editorial pipeline) can be built against the same MCP surface without re-ingesting data. Two parts of the system that move on different timelines, and MCP is the seam between them.

Results are verified rows from a database, not the model's memory. Hallucination on facts becomes structurally hard: the LLM can rephrase a result, but it can't fabricate an issue number or a developer interview that doesn't exist in the corpus. The schema is the API contract; the 11 extraction fields and 14 historical tags become the surface the model queries against.

Permissions and operations live at the tool layer rather than in prompt rules. The MCP server exposes search and read but not write or delete; rate limits and audit logging sit between the model and the data. The same corpus serves multiple model clients without each one needing its own integration or its own copy.

What the pipeline taught

Processing 100,000+ pages exposed failure modes that only appear at scale. Early attempts used a single orchestrator agent to manage an entire issue (250 pages at once). It would silently stop mid-run: not crash, not error, just stop. No trace, no diagnostic, pages simply not indexed. The fix was structural: 14-page batches with explicit completion checks at every boundary. An agent that can only fail on 14 pages is an agent that can be retried without consequence.

LLM compliance is the problem that keeps returning. A model given a strict extraction schema, a rules document, and a workflow will follow all three, until it doesn't. Game names get garbled. Required fields get invented from context. Array fields come back as plain strings. A run that looks clean at page 20 has hallucinated entries by page 180. The quality system (7 audit types, a detector for descriptions under 50 characters, per-issue average length thresholds) exists because the model cannot be trusted to self-police across an entire magazine run.

The most concrete measure of this: more than a hundred issues had to be re-indexed after a quality audit surfaced systematic degradation. The Gamest mass-indexing run produced issues where average description length was 63-68 characters and 38-106 pages per issue had descriptions under 50 characters: not just thin, but placeholder-quality outputs that passed the pipeline silently. Dengeki PlayStation issues came back with garbled Japanese game titles: the model had stopped transliterating correctly and was emitting corrupted romaji. Each re-index pass was a session of 1-2 issues, and re-indexing introduced its own failure: batch scripts that created a duplicate magazine entry instead of updating the existing one, requiring a post-session deduplication query before the database was clean.

The model strategy has evolved with the model market. The original API-based runs used Haiku; evaluation surfaced unacceptable hallucination on stylized Japanese text, and the entire Haiku-indexed corpus is now being re-run on Gemini 2.5 Flash with key rotation across the free tier. Difficult batches and human-flagged deep dives go to Sonnet vision or Gemini Pro. The schema is model-independent, so each migration is a re-run rather than a rebuild, and the verification suite doubles as the regression test for promoting a new model to default.

Gamest alone needed nine successive normalization scripts, each one written to handle a new edge case or failure mode that the previous version missed. That number is an honest record of how many times the pipeline was believed to be finished.
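The 14-page batching with completion checks described above can be sketched roughly as follows. This is an illustration, not the pipeline's code: `index_batch` stands in for whatever model call does the extraction, and the flaky indexer is a made-up stand-in that reproduces the "stops without erroring" behaviour.

```python
from typing import Callable, Iterator

BATCH_SIZE = 14  # batch size named in the text


def batches(total_pages: int, size: int = BATCH_SIZE) -> Iterator[range]:
    """Yield consecutive page spans (1-based) of at most `size` pages."""
    for start in range(1, total_pages + 1, size):
        yield range(start, min(start + size, total_pages + 1))


def index_issue(
    total_pages: int,
    index_batch: Callable[[list[int]], dict[int, dict]],
    max_attempts: int = 3,  # hypothetical retry budget
) -> dict[int, dict]:
    """Index an issue batch by batch, verifying every page at each boundary."""
    indexed: dict[int, dict] = {}
    for span in batches(total_pages):
        missing = list(span)
        for _ in range(max_attempts):
            indexed.update(index_batch(missing))
            # Completion check: which pages of this span still have no record?
            missing = [p for p in span if p not in indexed]
            if not missing:
                break
        else:
            # Retries exhausted: fail loudly instead of skipping silently.
            raise RuntimeError(f"pages never indexed: {missing}")
    return indexed


def make_flaky_indexer():
    """Stand-in indexer that silently stops once, just before page 100."""
    state = {"tripped": False, "calls": 0}

    def index_batch(pages: list[int]) -> dict[int, dict]:
        state["calls"] += 1
        if 100 in pages and not state["tripped"]:
            state["tripped"] = True
            pages = [p for p in pages if p < 100]  # stops early, raises nothing
        return {p: {"description": f"placeholder record for page {p}"} for p in pages}

    return index_batch, state


index_batch, state = make_flaky_indexer()
records = index_issue(250, index_batch)
print(len(records), "pages indexed in", state["calls"], "calls")
```

Because the check runs at every batch boundary, the silent stop costs one retry of at most 13 pages rather than an undetected gap in a 250-page issue.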

Highlights

- Ingestion pipeline: 107,000+ pages across 600+ issues, concurrent vision processing, resumable batch spans, no silent skips

- Schema: 11-field extraction with 14 historical-tag classifications, 18,000+ article-worthy pages with editorial notes

- Multi-model strategy: Gemini 2.5 Flash (free-tier key rotation) for primary indexing, Sonnet vision and Gemini Pro for deep dives, Haiku-era runs being re-indexed under newer models

- MCP server exposes the corpus directly to Claude and other MCP clients alongside the FTS5 search used by the editorial layer

- Quality gates: 7-type audit system and 5-type drift detection surface degradation without operator intervention
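As a rough illustration of the quality gates, the sketch below covers three of the checks the text mentions: descriptions under 50 characters, a per-issue average length threshold, and array fields that came back as plain strings. The 50-character cutoff is from the text; the average threshold of 100, the field names, and the record layout are assumptions made for the example.

```python
from statistics import mean

MIN_DESCRIPTION_CHARS = 50   # cutoff named in the text
MIN_ISSUE_AVERAGE = 100      # hypothetical per-issue threshold
ARRAY_FIELDS = ("games", "developers", "people")  # assumed subset of the schema


def audit_issue(pages: list[dict]) -> dict:
    """Return a report flagging thin descriptions and malformed array fields."""
    lengths = [len(p.get("description", "")) for p in pages]
    short_pages = [
        p["page"] for p, n in zip(pages, lengths) if n < MIN_DESCRIPTION_CHARS
    ]
    # A field that should be a list but is missing or a plain string is a failure.
    non_array_fields = [
        (p["page"], field)
        for p in pages
        for field in ARRAY_FIELDS
        if not isinstance(p.get(field), list)
    ]
    average = mean(lengths) if lengths else 0
    return {
        "average_length": average,
        "short_pages": short_pages,
        "non_array_fields": non_array_fields,
        "needs_reindex": (
            average < MIN_ISSUE_AVERAGE or bool(short_pages) or bool(non_array_fields)
        ),
    }


# Placeholder records for illustration; not real corpus data.
sample_issue = [
    {"page": 1, "description": "A" * 120,
     "games": ["Example Game"], "developers": [], "people": []},
    {"page": 2, "description": "Ad page.",
     "games": "Example Game", "developers": [], "people": []},
]

report = audit_issue(sample_issue)
print(report)
```

Checks like these run on the stored output rather than asking the model to grade itself, which is the point: an issue averaging in the 60s with dozens of sub-50-character descriptions gets flagged for re-indexing whether or not the run reported success.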